AI Didn’t Kill Stack Overflow, Quora, or Reddit — It Rewired How We Learn

Stack Overflow, Quora, Reddit — what's changing, what's dying, and what happens to collective knowledge when fewer people contribute back.

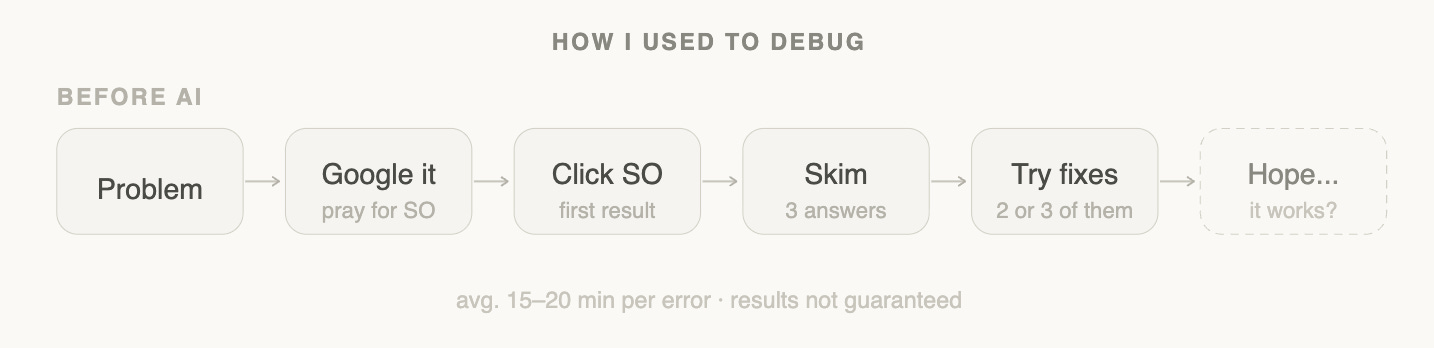

A few years ago, my debugging workflow looked like this:

Open Google → type error → click the first Stack Overflow link → skim answers → try 2–3 fixes → hope something works.

Today? I paste the error into ChatGPT and get a working solution in seconds. No tabs. No scrolling. No “accepted answer from 2016.”

At first glance, it feels like a simple productivity win. But zoom out and something much bigger is happening.

AI isn’t just changing how we find answers. It’s changing whether we ask questions in public at all — and that distinction matters enormously.

The Shift in One Line

Google gave you links. AI gives you answers.

That one sentence sounds obvious until you sit with what it actually means for the infrastructure of knowledge on the internet.

Or put differently:

AI didn’t replace Stack Overflow.

It replaced the need to ask publicly.

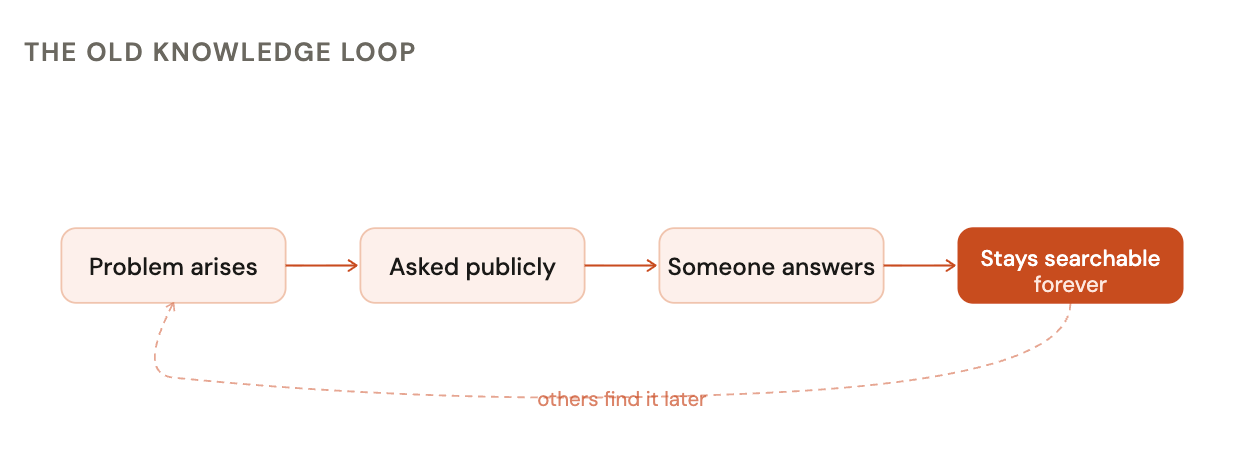

The Old Model: Knowledge as a Public Good

For nearly two decades, the internet ran on a beautifully simple loop:

- Someone had a problem

- They asked it publicly

- Someone else answered

- The answer stayed searchable forever

This created an incredible public archive of knowledge.

Stack Overflow became the default brain for developers

Quora became a place for long-form thinking

Reddit became a mix of expertise, opinions, and lived experiences

The key idea was simple:

If you had a question, someone else probably already asked it.

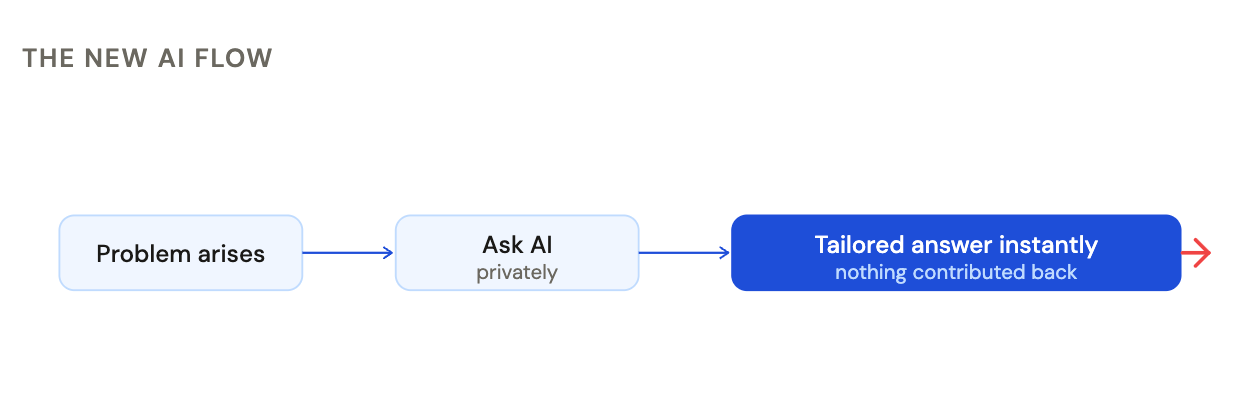

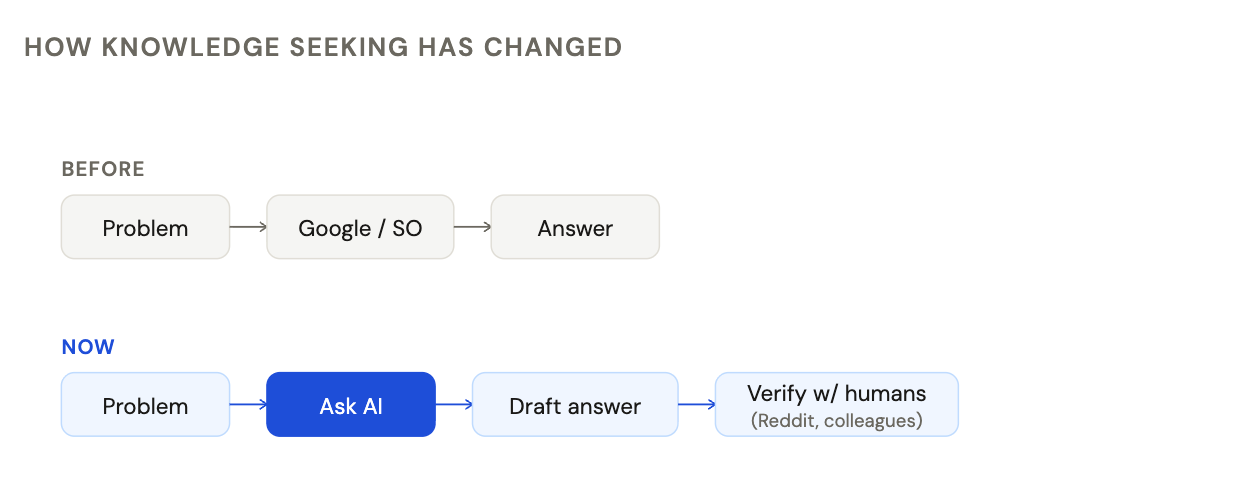

The New Model: Knowledge as an Instant Service

AI breaks this loop.

Now the flow looks like:

- You have a problem

- You ask AI privately

- You get a tailored answer instantly

No waiting.

No posting.

No contributing back.

That small change has enormous second-order effects — not just for these platforms, but for the quality of knowledge the internet will hold five years from now.

The Contrarian Take

AI didn’t just disrupt these platforms.

It exposed that a large percentage of questions were low-value to begin with.

- Repetitive

- Already answered

- Easily automatable

That’s exactly why AI could replace them so quickly.

This isn’t just a technology shift.

It’s a filtering mechanism.

AI is removing the need for questions that never needed to be asked publicly in the first place.

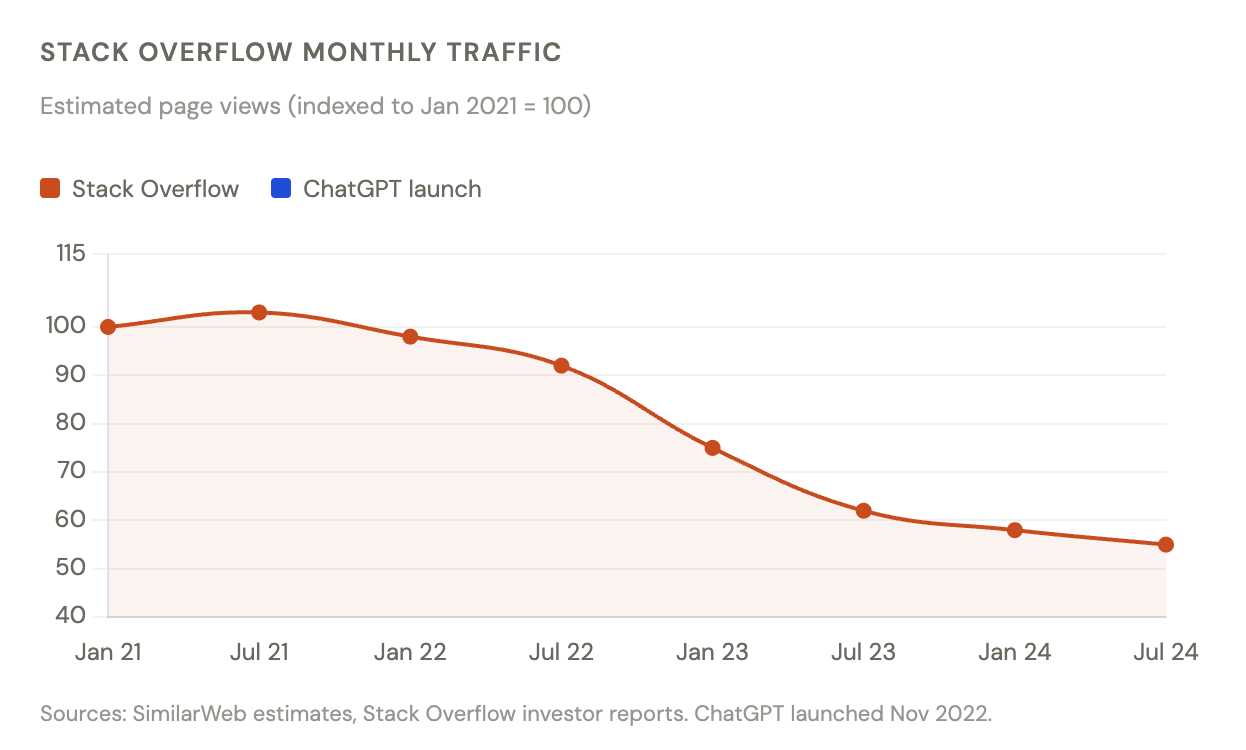

1. Stack Overflow: From First Stop to Backup Option

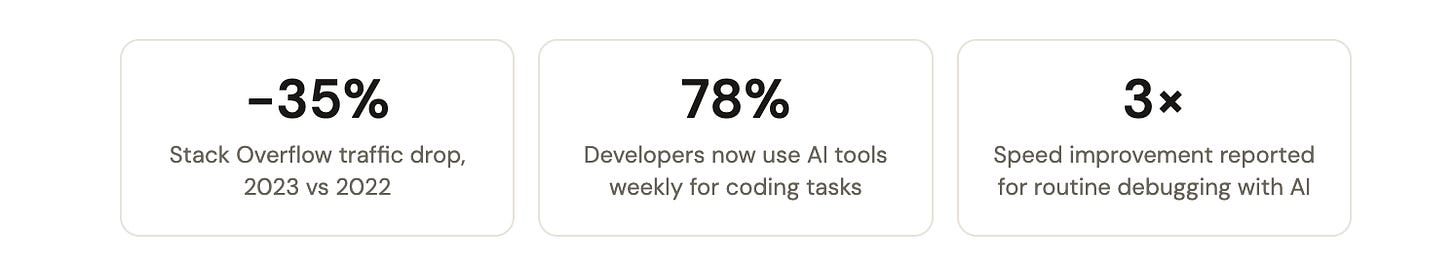

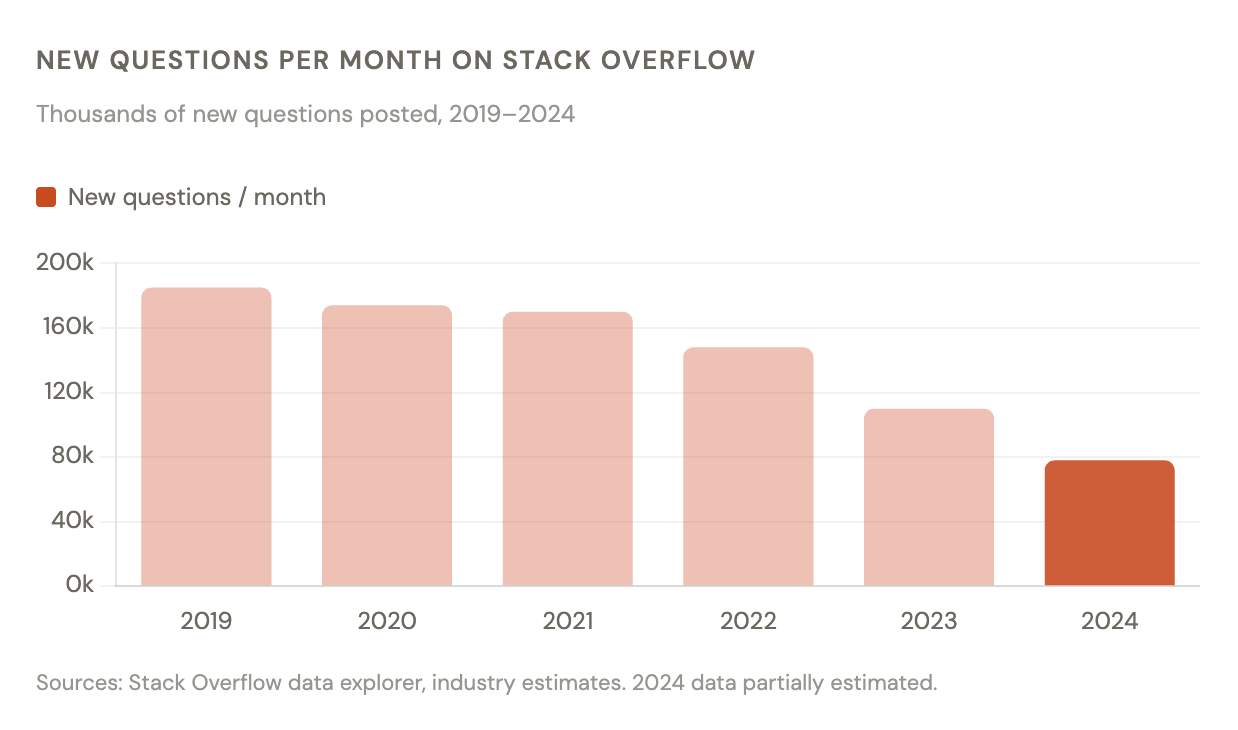

Stack Overflow is where the shift is most obvious.

Its core value was speed + accuracy for coding problems.

But AI is simply better at that use case.

A real example

I recently hit a Python issue:

“RuntimeError: This event loop is already running”

Earlier, I would:

- Search Google

- Open 3 Stack Overflow threads

- Try different answers

- Piece together a solution

Total: ~15–20 minutes

Now:

- Paste error into ChatGPT

- Get the exact fix + explanation

Done in under 30 seconds

I didn’t even think about opening Stack Overflow.
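For readers who haven't run into that error, here is a minimal sketch of one common way it shows up and one common way out. The post doesn't show my actual code or the fix I was given, so treat this as an illustration under assumptions: it uses the standard `asyncio` event loop, and `fetch_value`, `broken`, and `fixed` are hypothetical names made up for the example.

```python
import asyncio


async def fetch_value() -> int:
    """Stand-in for any coroutine whose result you need (hypothetical)."""
    await asyncio.sleep(0)
    return 42


async def broken() -> str:
    """Reproduce the error: try to drive the loop from code it is already running."""
    loop = asyncio.get_running_loop()
    coro = fetch_value()
    try:
        # Blocking call on the very loop that is executing this coroutine.
        loop.run_until_complete(coro)
    except RuntimeError as exc:
        coro.close()  # the coroutine never started; close it to avoid a warning
        return str(exc)
    return "no error"


async def fixed() -> int:
    """Inside async code, await the coroutine instead of re-entering the loop."""
    return await fetch_value()


if __name__ == "__main__":
    print(asyncio.run(broken()))  # prints the RuntimeError message
    print(asyncio.run(fixed()))
```

The same message tends to appear in notebooks and some web frameworks, where a loop is already running before your code starts. Whether awaiting directly is the right fix depends on the surrounding code, which is exactly the kind of context an AI assistant can ask about and a static thread cannot.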

But there’s a catch.

AI feels right even when it’s wrong.

In many cases, it produces answers that are:

- Confident

- Clean

- Slightly incorrect

And because the experience is so smooth, it’s easy to trust it without verifying.

That’s a very different failure mode compared to Stack Overflow, where disagreement and discussion were visible.
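To make "clean but slightly incorrect" concrete, here is a hypothetical illustration. It is not an actual AI response; both functions are invented for this example. The first version reads fine at a glance, but its default list is created once and shared across calls. The second is what a quick check would push you toward.

```python
from typing import Optional


def add_tag_suggested(tag: str, tags: list = []) -> list:
    """Hypothetical clean-looking suggestion: the default list is shared across calls."""
    tags.append(tag)
    return tags


def add_tag_checked(tag: str, tags: Optional[list] = None) -> list:
    """Same intent, but a fresh list is created whenever none is passed in."""
    if tags is None:
        tags = []
    tags.append(tag)
    return tags
```

Calling `add_tag_suggested("a")` and then `add_tag_suggested("b")` returns `["a", "b"]` the second time, while `add_tag_checked("b")` returns just `["b"]`. Nothing about the first version looks wrong until you run it twice, which is the point: a two-line check catches what a smooth explanation hides.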

What changed?

Stack Overflow answers are:

- Static

- Context-limited

- Often outdated

AI answers are:

- Context-aware

- Interactive

- Personalized to your code

That’s a fundamentally better experience.

There’s another uncomfortable truth here.

Even before AI, the system wasn’t perfect.

Research on obsolete Stack Overflow answers shows:

- Among answers that became obsolete, roughly 58% were likely already obsolete when they were first posted

- Only ~20% of obsolete answers ever get updated

Meaning:

AI didn’t break the system.

It made the existing cracks visible: static, community-driven answers struggle to keep up with fast-moving ecosystems.

The result

Stack Overflow isn't dead. But it has quietly shifted from being the first stop to a fallback: a place for deep threads, edge cases, and historical context that AI can't yet replicate. For most day-to-day debugging, developers aren't even opening the tab.

2. Quora: When AI Becomes the Content

Quora took a very different path. Instead of resisting AI, it embraced it — launching Poe and leaning into AI-generated answers. At first, this seems smart. More answers, more content, more engagement.

But something subtle broke.

The “uncanny answer” problem

Try searching:

“How do I become a better software engineer?”

You’ll find answers that are:

Well-structured

Grammatically perfect

Logically correct

But…

They feel empty.

No real stories.

No scars.

No “this failed in production at 2 AM.”

Why this matters

The value of Quora was never just information.

It was:

Perspective from people who had actually done the thing.

AI can simulate knowledge.

It struggles to simulate lived experience.

And when too much content is AI-generated, the platform starts to feel like:

A content factory instead of a thinking space.

3. Reddit: Still Standing (For Now)

Reddit is holding up much better.

Because its core value isn’t answers.

It’s people.

I still go to Reddit, but not for things like:

“What is Kafka?”

“Explain microservices”

I go for:

“Did this architecture actually work in production?”

“What are the real downsides of this approach?”

“How bad is on-call in this company?”

A real example

I was recently deciding between:

Event-driven architecture

Synchronous APIs

AI gave me:

Clean pros/cons

Structured explanations

But Reddit gave me:

“This blew up at scale because…”

“Debugging this is a nightmare”

“We had to roll this back”

That’s not information.

That’s experience.

Why Reddit survives

Because people don’t just want answers.

They want:

Validation

Contradictions

War stories

Nuance

And that’s still very hard for AI to replicate.

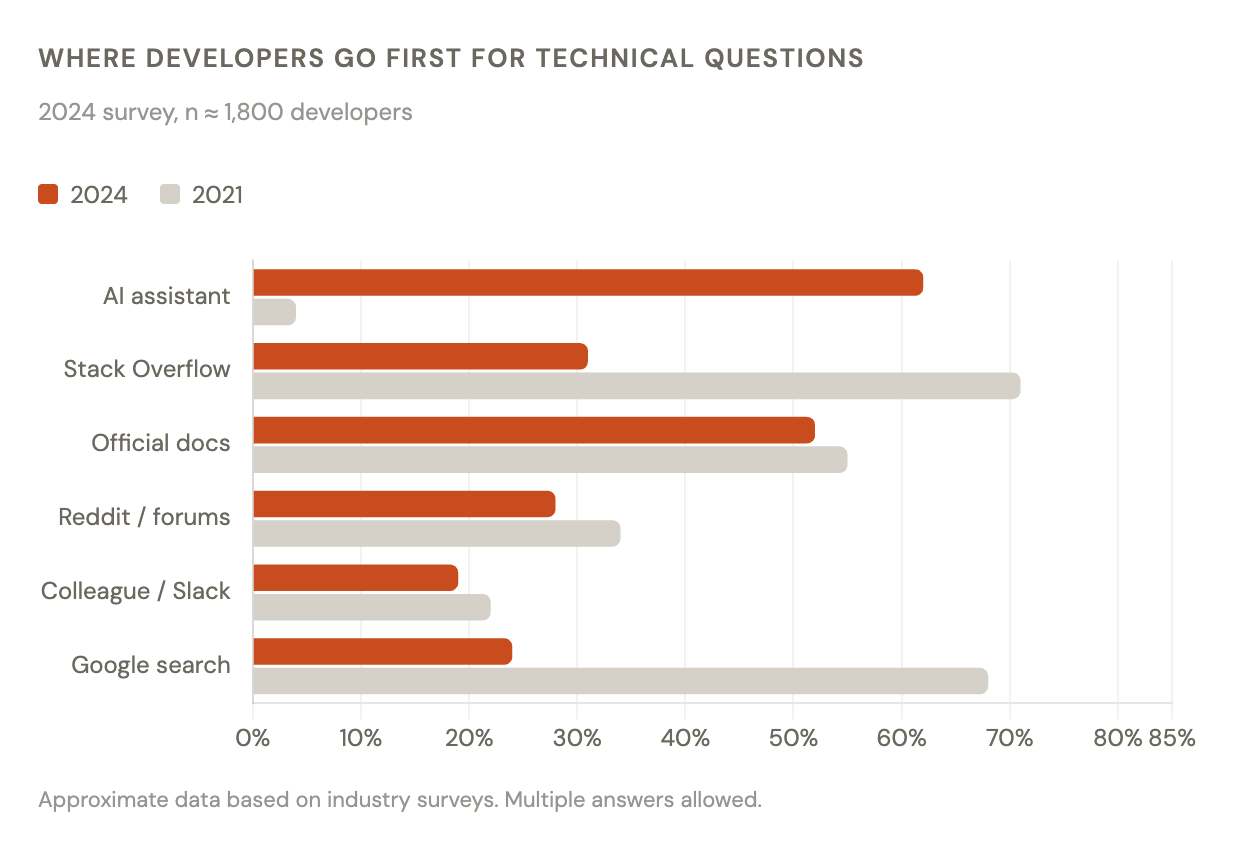

The Bigger Shift: From Discovery to Validation

The most important change is behavioral.

We’ve moved from:

“Ask the internet”

to:

“Ask AI, then verify with humans”

That’s a big deal.

Because it changes the role of communities:

Before: primary source of answers

Now: secondary layer for validation

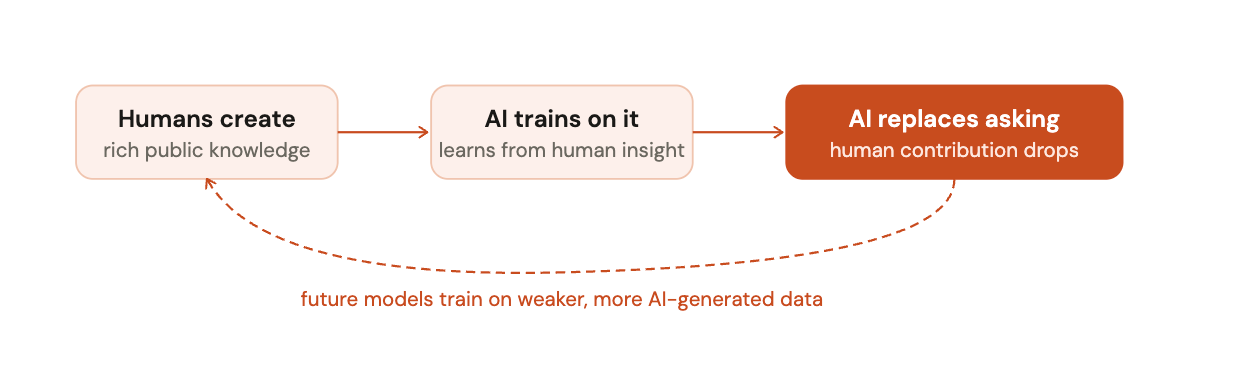

The Hidden Risk Nobody Talks About

There’s a second-order effect here.

AI models are trained on:

- Stack Overflow

- Reddit

- Quora

But now:

- Fewer people ask questions publicly

- Fewer people write detailed answers

- More content is AI-generated to fill the gap

So over time:

The quality of the source knowledge itself may decline.

It’s a strange loop: the models learn from community content, and in doing so they weaken the incentive to create it.

We’re still early, but this feedback loop is worth paying attention to.

What This Means for Engineers

If you’re a developer, this shift matters a lot.

Because it changes what skills are valuable.

What’s becoming commoditized

- Syntax knowledge

- API usage

- Basic debugging

- “How do I fix this error?”

AI handles these extremely well.

What’s becoming more valuable

- System design decisions

- Trade-offs

- Debugging complex distributed systems

- Understanding real-world constraints

- Experience-backed judgment

In short:

Knowing what to do is less valuable than knowing why and when to do it.

In other words:

The value is shifting from answers to judgment.

My Personal Workflow Now

This is how I work today:

- Start with AI
  - Fast answers
  - Quick iterations
  - Unblocks me instantly

- Move to community when needed
  - Edge cases
  - Conflicting opinions
  - Real-world validation

- Trust experience over perfection
  - If an answer feels “too clean,” I double-check

Final Thought

AI didn’t kill these platforms.

It just removed friction.

And once friction disappears, behavior changes.

We ask fewer public questions

We rely less on shared knowledge

We move faster, but contribute less

The internet is shifting from:

A shared brain

to:

A private assistant

And that raises a deeper question:

What happens to collective knowledge when fewer people feel the need to contribute back at all?